Jobs can be submitted from WranglerView using the items under the Submit menu. Selecting one will launch a modal submission dialog with the standard framework and job-specific parameters. Specify the fields and then press the "Submit" button to submit the job to the Qube! Supervisor.

Submissions for specific applications, such as Maya and Nuke, are explained in detail in their own sections. Please refer to that documentation for submissions to individual applications. This page documents the complete set of parameters; individual applications may not need all of them, or may present them differently.

Buttons

There is a common set of buttons located at the bottom of the Submission Dialog.

- Set Defaults: Store the values listed in the current submission dialog as the defaults for that Jobtype in the User Preferences. The interface will use those values the next time the dialog is opened. This allows you to specify common fields like Priority or Executable that should always have the same value.

- Clear Defaults: Remove any stored defaults for that Jobtype submission dialog.

- Expert Mode: Toggle button to display or hide expert and non-default values in the submission dialog, reducing clutter when there are a lot of parameters. The current state of this toggle is stored in the Preferences Dialog.

- Save [Disk Icon]: Store the current properties of the job from the dialog as a file (by default an XML file). This can be used to submit through the WranglerView at a later time with "Submit->File…", from the command line with the qbsub command, or via the API.

- Cancel: Cancel the job submission and close the dialog.

- Submit: Submit the job with the specified parameters to the Qube! Supervisor.

Basic Parameters

Basic Parameters for all Jobs:

- Name: name used for the job, usually artist specified.

- Priority: priority of the job. A number between 1 and 9999. Lower numbers mean higher priority.

- CPUs: The number of processes (or subjobs/instances) to run the job with at the same time. When rendering, this equates to the number of frames being rendered at the same time.

Render Thread and Job Reservation Controls

This section is located near the top of the submission UI, and is collapsed when 'Expert Mode' is not selected. For applications that do not support setting the number of threads, this section is not visible at all.

Enabling 'Expert Mode' at the bottom of the UI expands this section.

Thread Control Behavior:

Checking Use All Cores for applications or renderers that support auto-detection of the number of cores installed on a worker host will set the renderer's appropriate control to enable this feature. In the case of a Maya job, it sets renderThreads=0; for the Mentalray renderer, it sets autoRenderThreads=True. It will try to "do the right thing" for each application where this control is visible.

Checking Render on all cores also sets the job's reservation string to reserve all cores by appending a "+" to the reservation, as in host.processors=N+. This means "only start on a worker with N free slots, but reserve them all, so that no other jobs will start on this worker while I'm running on it". The Min Free Slots value determines N, so setting it to 4 means "start only on a worker with 4 free slots".

Render on all cores also disables the bottom-half of this section, Specific Thread Count and Slots=Threads, whose behavior is explained below.

Setting Specific Thread Count, on the other hand, does not necessarily reserve all the cores on the worker. It only sets the renderer's own thread-count control; in Maya's case, this is Render Threads.

Note that the job's reservation does not change, and is probably still at the default value of host.processors=1. This supports workers that are configured with fewer slots than they have cores.

The Slots=Threads control will link the job reservations to the thread count, so a 4-threaded job will reserve 4 worker slots. It also disables the Render on all cores and Min Free Slots controls.

As you change the Specific Thread Count control the reservations value will automatically update.
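The interaction between these controls and the reservation string can be sketched in a few lines of Python. This is only an illustration of the behavior described above, not code from Qube!: the function name `reservation` and its parameter names are hypothetical, and the default of `host.processors=1` is taken from the text.

```python
def reservation(min_free_slots=1, all_cores=False,
                thread_count=None, slots_equal_threads=False):
    """Return a host.processors reservation string (illustrative helper only)."""
    if all_cores:
        # Trailing "+": start on a worker with N free slots, then reserve them all.
        return "host.processors=%d+" % min_free_slots
    if slots_equal_threads and thread_count:
        # Slots=Threads links the reserved slots to the thread count.
        return "host.processors=%d" % thread_count
    # A specific thread count alone leaves the reservation at its default.
    return "host.processors=1"


print(reservation(all_cores=True, min_free_slots=4))
print(reservation(thread_count=4))
print(reservation(thread_count=4, slots_equal_threads=True))
```

The three calls correspond to Render on all cores with Min Free Slots set to 4, a Specific Thread Count of 4 on its own, and the same thread count with Slots=Threads checked.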

Parameters for Cmdline Jobs:

- Command: The command to run on the Worker. Paths and syntax should be what the Worker's OS expects, not the submitting machine.

- Shell (Linux/OS X): Specify the shell to use when executing the command line on the Worker. Only visible in Expert Mode.

Parameters for Cmdrange Jobs:

- Command: Same as the Cmdline Job, except that the following strings are substituted based on the frame being executed for the given task:

  - QB_FRAME_NUMBER

  - QB_FRAME_START

  - QB_FRAME_END

  - QB_FRAME_STEP

  - QB_FRAME_RANGE

- Range: The frame range to execute.

- Execution:

  - Individual Frames

  - Chunks with n Frames

  - Split into n Partitions

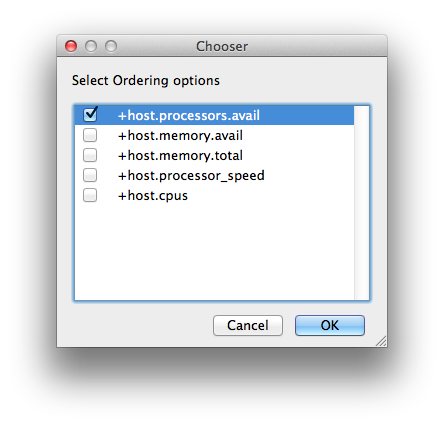

- Ordering: Specify whether tasks should be executed in ascending, descending, or binary sort (first, last, middle, split the middle values, …) order.
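To make the Cmdrange parameters more concrete, the sketch below splits a frame list into chunks or partitions and fills in the QB_FRAME_* strings for one task. It is an approximation written for this page, not Qube!'s implementation: the helper names, the `render` command, the format used for QB_FRAME_RANGE, and the choice of the first frame for QB_FRAME_NUMBER are all assumptions.

```python
def chunk_frames(frames, chunk_size):
    """Split frames into consecutive chunks of at most chunk_size frames."""
    return [frames[i:i + chunk_size] for i in range(0, len(frames), chunk_size)]


def partition_frames(frames, partitions):
    """Split frames into at most `partitions` roughly equal chunks."""
    if not frames:
        return []
    size = -(-len(frames) // partitions)  # ceiling division
    return chunk_frames(frames, size)


def expand_command(command, chunk, step=1):
    """Replace the QB_FRAME_* strings for one task (assumed formats)."""
    start, end = chunk[0], chunk[-1]
    values = {
        "QB_FRAME_NUMBER": str(start),  # assumption: first frame of the task
        "QB_FRAME_START": str(start),
        "QB_FRAME_END": str(end),
        "QB_FRAME_STEP": str(step),
        "QB_FRAME_RANGE": "%d-%d" % (start, end),  # assumed "start-end" format
    }
    for token, value in values.items():
        command = command.replace(token, value)
    return command


frames = list(range(1, 11))
chunks = chunk_frames(frames, 4)
print(chunks)
print(expand_command("render -s QB_FRAME_START -e QB_FRAME_END scene.ma", chunks[1]))
```

With Individual Frames, each task would correspond to a one-frame chunk, so QB_FRAME_START and QB_FRAME_END would carry the same value in this sketch.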

Common Parameters for SimpleCmd Jobs:

- All the fields for either Cmdline or Cmdrange jobs (see above), plus the following.

- Cmd Template: String used to construct the command line along with the rest of the job parameters. Python string representations are used, e.g. %(val)s to represent the string value from "val". If listed, %(argv)s places all optional arguments at that location instead of at the end. This constructs a command line that is then used by the Cmdline or Cmdrange jobtypes on the Workers. See above for the replacement strings for Cmdrange.

- Executable: Path to the renderer or executable to run. Unless it is in the path in the Worker's environment, this needs to be set to an absolute path (to where the executable is located on the Worker).
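The Cmd Template substitution uses standard Python %-formatting with a dictionary, which can be tried directly. The sketch below is a guess at how such a template might be expanded, including the rule that optional arguments go to the end unless %(argv)s appears in the template. The function `build_command`, the parameter names other than `argv`, and the paths are hypothetical.

```python
def build_command(template, params):
    """Expand a Cmd Template with Python %-formatting (illustrative only)."""
    command = template % params
    # If the template does not place %(argv)s itself, append the optional
    # arguments at the end, as described for the Cmd Template field.
    if "%(argv)s" not in template and params.get("argv"):
        command += " " + params["argv"]
    return command


params = {
    "executable": "/usr/local/bin/myrender",  # hypothetical renderer path
    "scene": "/jobs/shot010/scene.ma",        # hypothetical scene file
    "argv": "-verbose",
}

print(build_command("%(executable)s -file %(scene)s", params))
print(build_command("%(executable)s %(argv)s -file %(scene)s", params))
```

The first call appends `-verbose` after the scene file; the second places it where %(argv)s appears.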

Detailed Parameters

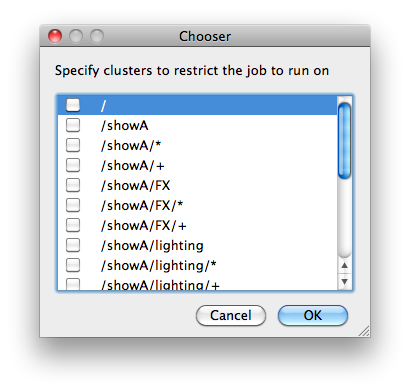

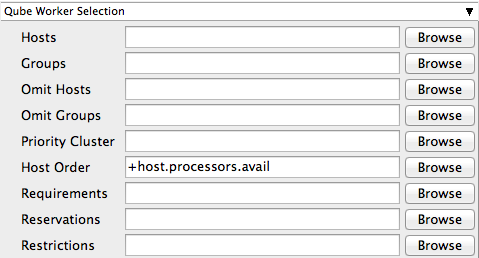

Qube Worker Selection

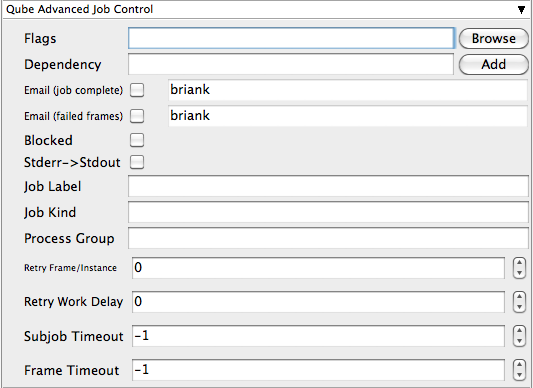

Qube Advanced Job Control

Qube Job Environment

- Cwd: (Current Working Directory) Explicitly specify the current working directory where the Worker should start the process

- Env vars: (Environment Variables) Explicitly set specific environment variables that should be used when running a job process on the Worker.

- Impersonate User: Submit job as specified user. Default is current user. Format: <optionaldomain>\<username> (advanced – requires Impersonate user permission)

Qube Output Parsing and Validation

- regex_highlights: Regular expression for highlighting information messages from stdout/stderr

- regex_errors: Regular expression for identifying fatal errors from stdout/stderr

- regex_outputPaths: Regular expression for identifying outputPaths of images from stdout/stderr

- regex_maxLines: Maximum number of lines to store for regex matched patterns for stdout/stderr

- validate_fileMinSize: Minimum size for identified outputPaths (in bytes). [0 disables test]
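As a rough illustration of how these fields fit together, the following sketch scans some log lines with an error pattern and an output-path pattern, then flags output files smaller than a minimum size. The patterns, log lines, and file sizes are invented for the example, and the helper names are hypothetical. How Qube! applies the expressions internally is not described here, so treat this only as a way to test your own patterns.

```python
import os
import re

# Hypothetical patterns of the kind one might enter in the dialog.
regex_errors = re.compile(r"\b(error|fatal)\b", re.IGNORECASE)
regex_output_paths = re.compile(r"Writing image: (\S+)")


def scan_log(lines, error_re, path_re):
    """Return (error lines, output paths) found in the log lines."""
    errors, paths = [], []
    for line in lines:
        if error_re.search(line):
            errors.append(line)
        match = path_re.search(line)
        if match:
            paths.append(match.group(1))
    return errors, paths


def undersized(paths, min_size, get_size=os.path.getsize):
    """Return paths smaller than min_size bytes; 0 disables the test."""
    if min_size == 0:
        return []
    return [p for p in paths if get_size(p) < min_size]


log = [
    "Writing image: /renders/a.0001.exr",
    "Warning: texture cache nearly full",
    "Writing image: /renders/a.0002.exr",
    "ERROR: out of memory",
]
sizes = {"/renders/a.0001.exr": 2048, "/renders/a.0002.exr": 10}  # fake sizes

errors, paths = scan_log(log, regex_errors, regex_output_paths)
print(errors)
print(undersized(paths, 1024, get_size=sizes.get))
```

Passing a dictionary lookup as `get_size` keeps the example independent of real files; by default the helper would read sizes from disk.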

Qube Actions

- generateMovie: Add a linked job to generate a movie from the output images. It will launch a second submission dialog to convert the images to a movie. This addresses the common action chain of rendering images and then converting those images into a QuickTime or similar movie. Note: This function will only work on jobtypes that retrieve the output image filenames into Qube (Maya, 3dsMax, Softimage, AfterEffects, etc).

Shotgun Submission

- Login: login username for Shotgun

- Task: Shotgun task

- Project: Shotgun project

- Shot/Asset: Shotgun shot/asset

- Version Name: Shotgun version template. Performs variable substitution using python format %(key)s to get the value. The dict keys are prefixed with "job.", "package.", and "shotgun." respectively.

- Description: Shotgun description. Performs variable substitution using python format %(key)s to get the value. The dict keys are prefixed with "job.", "package.", and "shotgun." respectively.
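The %(key)s substitution used by Version Name and Description is ordinary Python dictionary formatting, and dotted keys such as "job.name" work as plain dictionary keys. The sketch below shows the mechanism only. The individual keys (`name`, `id`, `range`, `project`) and the helper `build_context` are hypothetical; only the three prefixes come from the description above.

```python
def build_context(job, package, shotgun):
    """Merge three dicts into one, prefixing keys with their source name."""
    context = {}
    for prefix, source in (("job", job), ("package", package), ("shotgun", shotgun)):
        for key, value in source.items():
            context["%s.%s" % (prefix, key)] = value
    return context


context = build_context(
    job={"name": "shot010_beauty", "id": 1234},  # hypothetical job fields
    package={"range": "1-100"},                  # hypothetical package field
    shotgun={"project": "demo"},                 # hypothetical Shotgun field
)

version_name = "%(job.name)s_%(job.id)s" % context
description = "Frames %(package.range)s for %(shotgun.project)s" % context
print(version_name)
print(description)
```

A key that is missing from the dictionary raises `KeyError` in plain Python, so a template is worth testing this way before relying on it.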

Qube Notes

- Account: Arbitrary accounting or project data (user-specified)

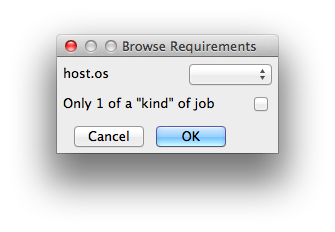

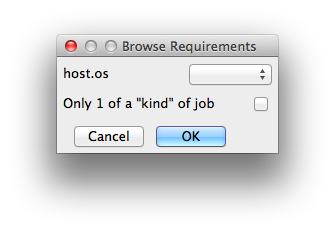

- Kind: Arbitrary typing information that can be used to identify the job. See "How to restrict a host to only one instance of a given kind of job, but still allow other jobs".

- Job Notes: Freeform text for making notes on this job

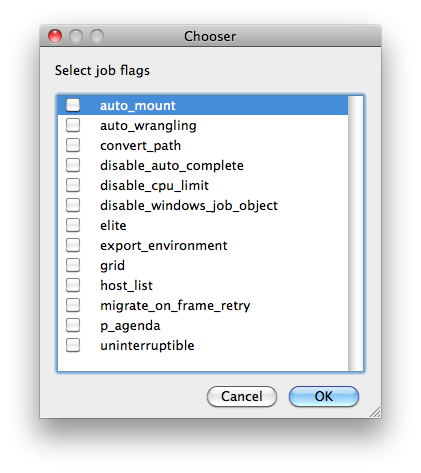

Job Flags

Flag | Value | Description |

|---|---|---|

auto_mount | 8 | Require automatic drive mounts on worker. |

auto_wrangling | 16384 | Enable auto-wrangling for this job. |

| convert_path | 131072 | Automatically convert paths on worker at runtime. |

disable_auto_complete | 8192 | Normally instances are automatically completed by the system when a job runs out of available agenda items. Setting this flag disables that. |

disable_cpu_limit | 4096 | Normally, if a job is submitted with the number of instances greater than there are agenda items, Qube! automatically shrinks the number of instances to be equal to the number of agenda items. Setting this flag disables that. |

disable_windows_job_object | 2048 | (Deprecated in Qube6.5) Disable Windows' process management mechanism (called the "Job Object") that Qube! normally uses to manage job processes. Some applications already use it internally, and job objects don't nest well within other job objects, causing jobs to crash unexpectedly. |

elite | 512 | Submit job as an elite job, which will be started immediately regardless of how busy the farm is. Elite jobs are also protected from preemption. Must be admin. |

export_environment | 16 | Use environment variables set in the submission environment, when running the job on the workers. |

expand | 32 | (Deprecated in Qube6.5) Automatically expand job to use as many instances as there are agenda items (limited by the total job slots in the farm). |

grid | 4 | Wait for all instances to start before beginning work (useful for implementation of parallel jobs, such as satellite renders). |

host_list | 256 | Run job on all candidate hosts, as filtered by other options (such as "hosts" or "groups"). |

| 1024 | Send e-mail when job is done. | |

migrate_on_frame_retry | 65536 | When an agenda item (frame) fails but is retried automatically because the retrywork option is set, setting this flag causes the instances to be migrated to another worker host, preventing the frame from running on the same host. |

| no_defaults | 524288 | Prevent supervisor from applying supervisor_job_flags |

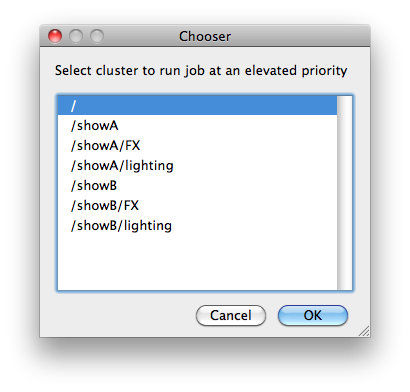

p_agenda | 32768 | Enable p-agenda for this job, so that some frames are processed at a higher priority. |

uninterruptible | 1 | Prevent job from being preempted. |
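Every value in the flag table is a distinct power of two, which suggests that several flags can be combined into one integer as a bitmask. Assuming that is how the values are meant to be used, the sketch below encodes and decodes such a value. The helper names are hypothetical, and the flag with value 1024 is left out because its name is not shown in the table.

```python
# Flag names and values copied from the table; the 1024 flag is omitted
# because its name is not given.
JOB_FLAGS = {
    "uninterruptible": 1,
    "grid": 4,
    "auto_mount": 8,
    "export_environment": 16,
    "expand": 32,
    "host_list": 256,
    "elite": 512,
    "disable_windows_job_object": 2048,
    "disable_cpu_limit": 4096,
    "disable_auto_complete": 8192,
    "auto_wrangling": 16384,
    "p_agenda": 32768,
    "migrate_on_frame_retry": 65536,
    "convert_path": 131072,
    "no_defaults": 524288,
}


def encode_flags(names):
    """Combine flag names into a single integer with bitwise OR."""
    value = 0
    for name in names:
        value |= JOB_FLAGS[name]
    return value


def decode_flags(value):
    """Return the sorted names of all known flags set in value."""
    return sorted(name for name, bit in JOB_FLAGS.items() if value & bit)


combined = encode_flags(["auto_mount", "export_environment"])
print(combined)
print(decode_flags(combined))
```

Under this assumption, `auto_mount` and `export_environment` together give 8 + 16 = 24, and decoding 24 returns the same two names.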